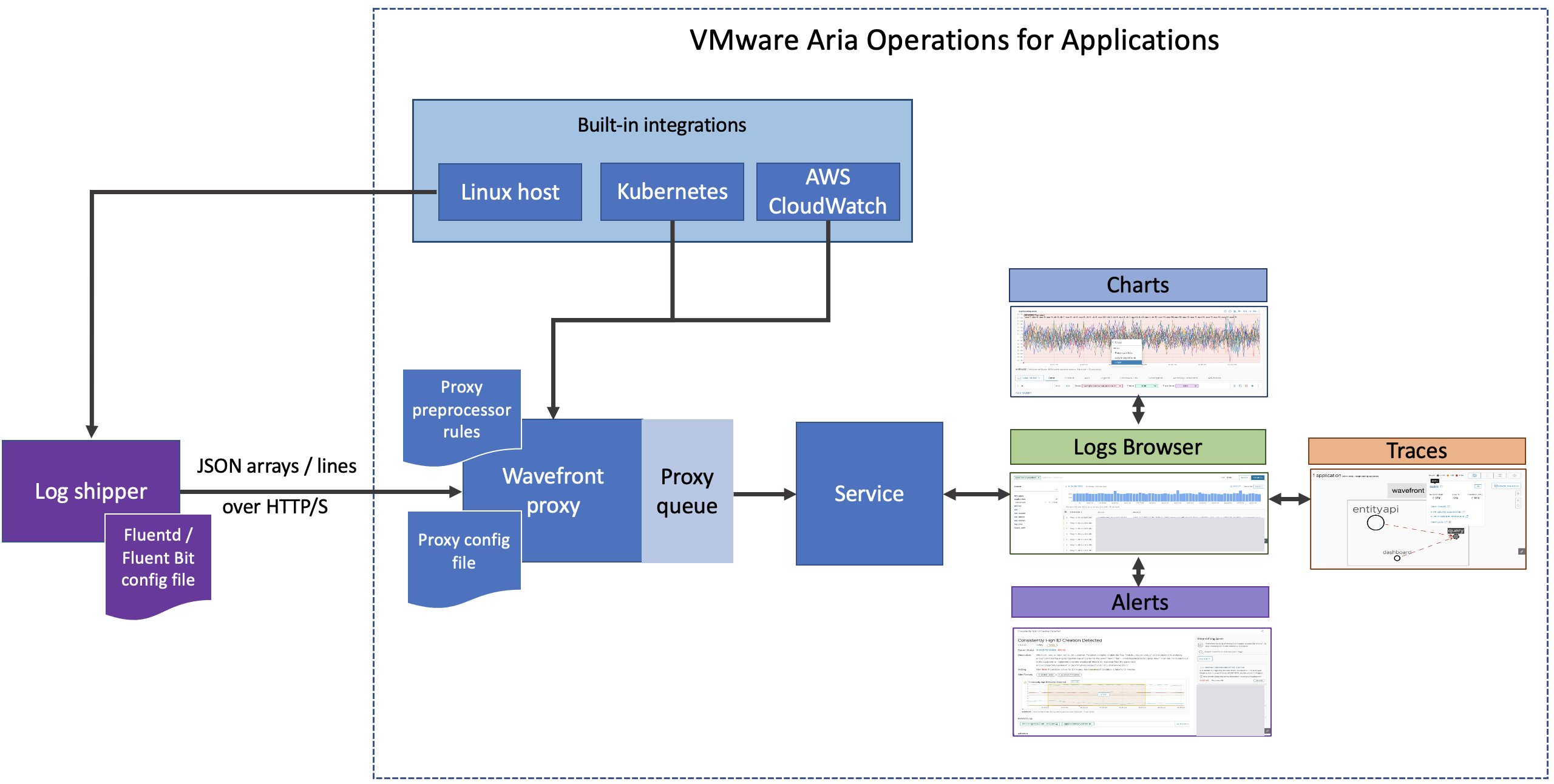

You can send logs to the Wavefront proxy from your log shipper or directly from your application. The Wavefront proxy sends the log data to our service.

Install the Wavefront Proxy

Our logging solution currently requires a Wavefront proxy and does not support direct ingestion. The Wavefront proxy accepts logs as JSON array or JSON lines payloads over HTTP or HTTPS and forwards them to the service.
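As a rough illustration of the two payload formats, the following Python sketch serializes the same records as a JSON array and as JSON lines, and builds an HTTP POST for the proxy. The `/logs?f=logs_json_arr` and `/logs?f=logs_json_lines` paths are taken from the log shipper examples later on this page. The proxy address, the record field names, and the `Content-Type` header are assumptions made for illustration only.

```python
import json
import urllib.request

# Hypothetical proxy location; 2878 is the default pushListenerPorts value.
PROXY_URL = "http://localhost:2878"


def encode_json_array(records):
    """Serialize records as a single JSON array."""
    return json.dumps(records).encode("utf-8")


def encode_json_lines(records):
    """Serialize records as JSON lines: one JSON object per line."""
    return "\n".join(json.dumps(r) for r in records).encode("utf-8")


def build_request(records, fmt="logs_json_arr", proxy_url=PROXY_URL):
    """Build (but do not send) an HTTP POST for the proxy's /logs endpoint."""
    encoders = {
        "logs_json_arr": encode_json_array,
        "logs_json_lines": encode_json_lines,
    }
    body = encoders[fmt](records)
    return urllib.request.Request(
        url=f"{proxy_url}/logs?f={fmt}",
        data=body,
        # Assumed header; check what your proxy version expects.
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Field names are illustrative, not a required schema.
    records = [{"message": "service started", "level": "info"}]
    request = build_request(records)
    # Sending requires a running proxy, so the call is left commented out:
    # urllib.request.urlopen(request, timeout=5)
    print(request.full_url)
```

Keeping the request construction separate from the send makes it possible to inspect the URL and body without a running proxy.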

Proxy System Requirements:

Proxy Kubernetes Requirements:

To install and configure a new proxy:

- Select Browse > Proxies.

- Click Add new proxy and follow the instructions on the screen.

- Edit the `wavefront.conf` file to open the `pushListenerPorts` to receive logs from the log shipper.

  For example:

  - If you installed the proxy on Linux, Mac, or Windows, open the `wavefront.conf` file, uncomment the `pushListenerPorts` configuration property, and save the file. The port is set to 2878 by default.

  - If you installed the proxy on Docker, the command you use opens the `pushListenerPorts` and sets it to 2878.

- Optionally, uncomment or add other logs proxy configurations in the `wavefront.conf` file.

- Optionally, configure preprocessor rules for logs in the `preprocessor_rules.yaml` file.

- Start the proxy.
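After the proxy starts, it can help to confirm that the listener port is reachable from the machine that will send logs. The following Python sketch attempts a TCP connection. The host name `localhost` is a placeholder, and 2878 is the default port mentioned above. A successful connection only shows that something is listening on that port; it does not prove that the listener is the Wavefront proxy.

```python
import socket


def port_is_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


if __name__ == "__main__":
    # "localhost" is a placeholder for the host where the proxy runs.
    print(port_is_open("localhost", 2878))
```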

Option 1: Use Our Integrations

You can monitor your Kubernetes clusters or Linux hosts using our built-in integrations and send logs to our system.

- Linux host integration: Install the Wavefront proxy and configure the log shipper.

- Kubernetes integration: Enable logs while you set up the integration, generate the script, and run it on your Kubernetes cluster.

Note: Logs are not supported when you use OpenShift.

- AWS CloudWatch integration: If you have already configured the AWS CloudWatch integration, you can create an AWS Lambda function to send logs to our service.

Option 2: Configure a Log Shipper

The log shipper sends your data to the Wavefront proxy. We support the Fluentd and Fluent Bit log shippers, which scrape and buffer your logs before sending them to the Wavefront proxy.

If you want to use a different log shipper, contact technical support.

Prerequisite:

Add the VMware domain (*.vmware.com) to the allowlist in your environment. Our service uses a VMware log cluster, so log data cannot be sent successfully unless this domain is allowed. If you want to narrow down the domain, contact your account representative.

Configure your log shipper:

- Install the log shipper. For example, install Fluentd or install Fluent Bit.

- Configure the log shipper to send data to the Wavefront proxy.

  - Add the hostname of the host where the proxy runs.

  - Add the `pushListenerPorts` that you configured in the proxy.

  For example:

  - Edit the Fluentd configuration file (`fluent.conf`) to send data to a proxy as follows:

    ```
    <match wf.**>
      @type copy
      <store>
        @type http
        endpoint http://<proxy url>:<proxy port (example:2878)>/logs?f=logs_json_arr
        open_timeout 2
        json_array true
        <buffer>
          flush_interval 10s
        </buffer>
      </store>
    </match>
    ```

  - Edit the Fluent Bit configuration file (`fluent-bit-<os>.conf`) to send data to a proxy as follows:

    ```
    [OUTPUT]
        Name   http
        Host   <proxy url>
        Port   <proxy port>(example: 2878)
        URI    /logs?f=logs_json_lines
        Format json_lines
    ```

- As part of preprocessing, tag the logs with the application and service name to ensure you can drill down from traces to logs.

- (Optional) If you’re already using a logging solution, specify alternate strings for required and optional log attributes in the proxy configuration file. See also My Logging Solution Doesn’t Use the Default Attributes.
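To make the tagging step concrete, here is a minimal Python sketch that adds application and service tags to a log record before it is sent. It is only an illustration of the idea for code that builds its own records; in a log shipper, the equivalent step would be done in the shipper's own configuration. The helper name `tag_record` and the attribute keys `application` and `service` are assumptions, so adjust the keys if your proxy configuration maps different strings.

```python
def tag_record(record, application, service):
    """Return a copy of record with application and service tags added."""
    tagged = dict(record)  # copy so the caller's record is not modified
    # Assumed attribute names; change them to match your proxy configuration.
    tagged["application"] = application
    tagged["service"] = service
    return tagged


if __name__ == "__main__":
    # Example values are hypothetical.
    print(tag_record({"message": "checkout failed"}, "shop", "checkout"))
```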